Why Glitched

A glitch in a system is not always a bug. Sometimes it is the system revealing that its assumptions were wrong all along.

The AI revolution is doing exactly that to the labor market. The glitch is not automation. The glitch is the assumption that careers are linear, that expertise compounds safely, that the path you built over a decade is structurally sound. That assumption is failing for a lot of people right now, and it is going to fail for a lot more.

Maybe your role is already gone. Maybe you can see the signals and want to get ahead of them. Maybe you are just curious about where your career could go, or you want to support someone navigating this. The tool does not require a crisis to be useful. It just requires a willingness to think clearly about what comes next. That is what glitched.sh is for.

That kind of thinking, outside the expected parameters of a stable linear career, is not a defect. It is often where the most useful things get built.

Glitched came from the intersection of building AWAF and a lot of real conversations with friends, colleagues, and other professionals who are quietly anxious about where their careers are going and not sure what to do with that anxiety. The question that kept coming up was not "am I safe right now" but "how do I actually think through this clearly." AWAF gave me a framework for reasoning about agent systems. Those conversations gave me the problem worth building for.

What It Does

The intake is deliberately short. Three questions. The agent does the rest.

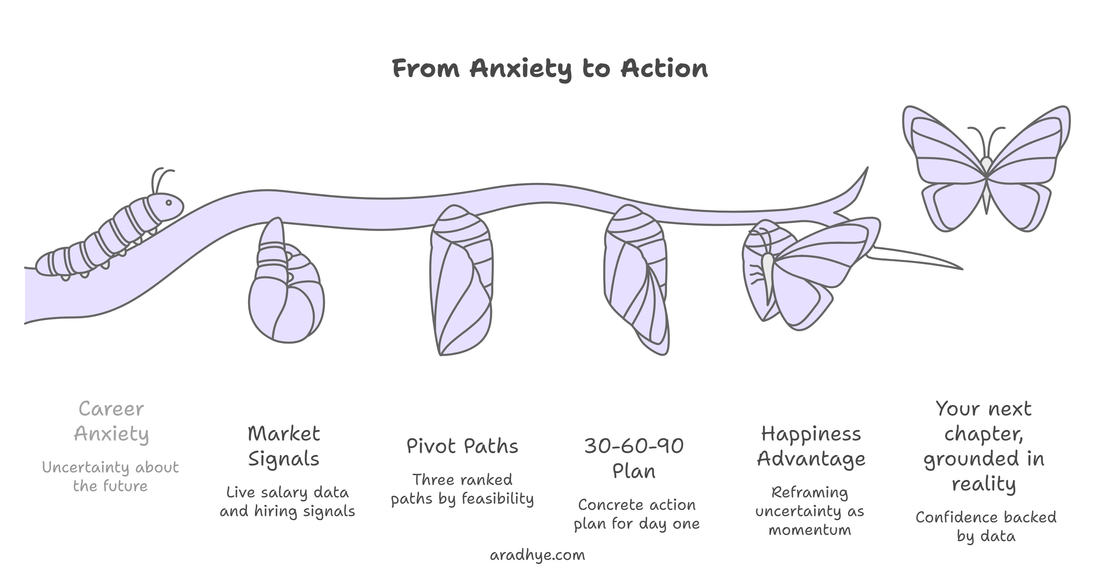

You tell it your background, your constraints, and where you want to go. What comes back is not advice. It is a structured pivot plan: three realistic paths ranked by feasibility, a 30-60-90 day action plan for the path you choose, and a framing layer built around the Happiness Advantage.

Here is the part most people underestimate. The paths glitched surfaces are grounded in live market signals: which roles employers are actually hiring for, which skills the market is paying a premium for right now, and which salary bands are realistic for someone with your background making a pivot in a given direction. The MarketAgent pulls this and enriches your profile before a single recommendation is generated.

This is work you could theoretically do yourself. You could spend a weekend crawling job boards, reading salary surveys, cross-referencing your skills against current postings, and prompting an LLM repeatedly to try to get something useful out. Most people do not do this, not because they are lazy, but because the signals are scattered, it is time-consuming, and by the time you have assembled a picture it is already partly stale. Glitched does it every session, in the background, before you see anything. You get the synthesis, not the homework.

The framing layer draws on Shawn Achor's research from his book The Happiness Advantage. Achor spent over a decade at Harvard studying the relationship between happiness and performance and found that the conventional formula is backwards. Most people believe success leads to happiness. His research showed the opposite: a positive mindset precedes and drives performance. Brains in a positive state are measurably more creative, more resilient, and more productive than brains in a stressed or neutral state.

Whether the disruption has already happened to you or you are anticipating it, the instinct is to treat uncertainty as a problem to escape from, which is exactly the wrong mode for thinking clearly about what comes next. The HappinessAgent does not just generate a plan. It reframes the situation. The uncertainty is real. So is the forward momentum it creates, if you can see it that way.

The UI is at github.com/YogirajA/glitched-ui.

How It Works

Here is the architecture, layer by layer:

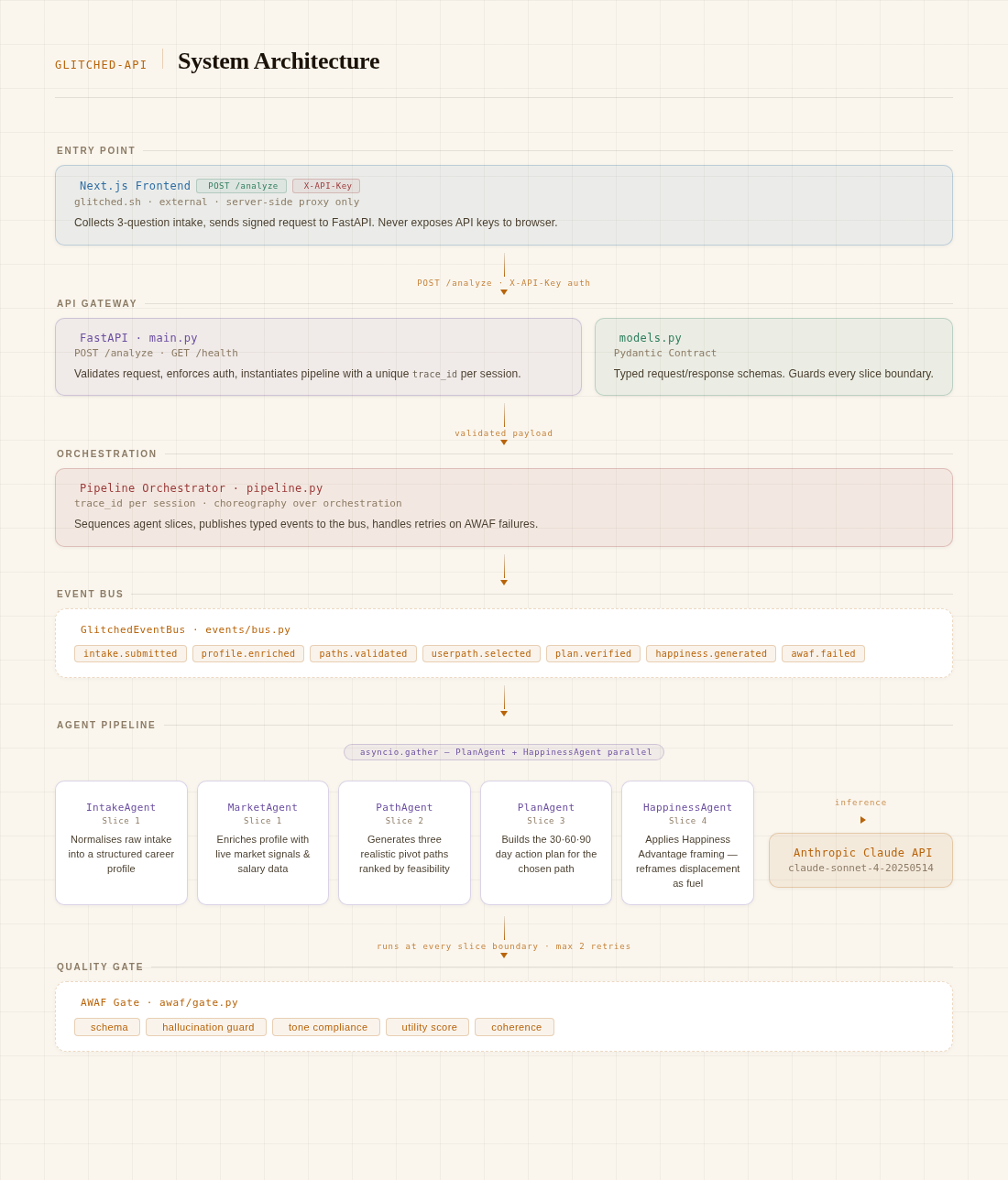

Entry Point

A Next.js frontend collects the three-question intake and sends a signed POST to the backend through a server-side proxy, so the API key never touches the browser. That is not optional hygiene. It is table stakes.

API Gateway

FastAPI handles POST /analyze and GET /health. Every request gets a unique trace_id per session. Pydantic models in models.py guard every slice boundary. If the shape is wrong, it fails fast before anything expensive runs.
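A minimal sketch of that fail-fast boundary, using only the standard library as a stand-in for FastAPI and Pydantic. The field names, the `IntakeRequest` class, and the `handle_analyze` function are assumptions for illustration, not the contents of models.py, and the sketch assumes one trace_id per request.

```python
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class IntakeRequest:
    """Hypothetical three-field intake; the real models.py may differ."""

    background: str
    constraints: str
    goal: str

    def __post_init__(self) -> None:
        # Fail fast: reject wrong types or empty answers before any agent runs.
        for name in ("background", "constraints", "goal"):
            value = getattr(self, name)
            if not isinstance(value, str) or not value.strip():
                raise ValueError(f"{name} must be a non-empty string")


def handle_analyze(payload: dict) -> dict:
    """Stand-in for a POST /analyze handler: validate, then attach a trace_id."""
    try:
        intake = IntakeRequest(**payload)
    except (TypeError, ValueError) as exc:
        # TypeError covers missing or unexpected keys; ValueError covers bad values.
        return {"status": 422, "error": str(exc)}
    # Assumption: a fresh identifier is minted for every accepted request.
    return {"status": 200, "trace_id": uuid.uuid4().hex, "intake": intake}


ok = handle_analyze(
    {"background": "QA engineer", "constraints": "remote only", "goal": "data engineering"}
)
bad = handle_analyze({"background": "QA engineer"})  # two answers missing
```

The point of the shape is that nothing expensive sits between the request and the validation step: a malformed payload is rejected before a single model call is made.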

Orchestration

pipeline.py sequences the agent slices and publishes typed events to an internal event bus. It handles retries on AWAF failures. The pipeline knows the order. The agents know their job. Nothing else.

Event Bus

GlitchedEventBus in events/bus.py carries named events across the pipeline:

intake.submitted → profile.enriched → paths.validated → userpath.selected → plan.verified → happiness.generated → awaf.failed

That last event, awaf.failed, is not a failure state. It is a signal. When AWAF catches something wrong at a slice boundary, the bus knows about it.
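The idea of named events on a bus can be sketched in a few lines. This is an in-process publish/subscribe toy, not the real GlitchedEventBus: the `SketchEventBus` class, its method names, and the payload fields are all assumptions.

```python
from collections import defaultdict
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], None]


class SketchEventBus:
    """Minimal in-process pub/sub; a hypothetical stand-in for events/bus.py."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, name: str, handler: Handler) -> None:
        self._handlers[name].append(handler)

    def publish(self, name: str, payload: dict[str, Any]) -> None:
        # Deliver to every handler registered for this event name, in order.
        for handler in self._handlers[name]:
            handler(payload)


bus = SketchEventBus()
seen: list[str] = []
bus.subscribe("profile.enriched", lambda p: seen.append(f"enriched:{p['trace_id']}"))
bus.subscribe("awaf.failed", lambda p: seen.append(f"gate-failed:{p['slice']}"))

bus.publish("profile.enriched", {"trace_id": "abc123"})
bus.publish("awaf.failed", {"trace_id": "abc123", "slice": "paths"})
```

Here awaf.failed is just another named event with its own subscribers, which is what lets the rest of the pipeline treat it as a signal to react to.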

Agent Pipeline

Five agents run across two execution slices. Slice 1 runs IntakeAgent and MarketAgent in parallel via asyncio.gather. The IntakeAgent normalises raw intake into a structured career profile. The MarketAgent enriches it with live market signals and salary data. Both must complete before Slice 2 begins.
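A sketch of the Slice 1 pattern with asyncio.gather. The two agent functions are hypothetical stand-ins with no model or network calls. In this sketch both agents read the raw intake and their results are merged afterwards. Whether the real MarketAgent works from the raw intake or from the normalised profile is not something this sketch settles.

```python
import asyncio


async def intake_agent(raw: dict) -> dict:
    """Hypothetical stand-in: normalise raw answers into a profile."""
    await asyncio.sleep(0)  # placeholder for a model call
    return {"skills": [s.strip().lower() for s in raw["background"].split(",")]}


async def market_agent(raw: dict) -> dict:
    """Hypothetical stand-in: collect market signals for the target direction."""
    await asyncio.sleep(0)  # placeholder for external lookups
    return {"target": raw["goal"], "signals": []}


async def run_slice_1(raw: dict) -> dict:
    # gather runs both coroutines concurrently and returns results in call order.
    profile, market = await asyncio.gather(intake_agent(raw), market_agent(raw))
    # Both results exist at this point, so the next slice can safely start.
    return {**profile, "market": market}


enriched = asyncio.run(
    run_slice_1({"background": "QA, Python ", "goal": "data engineering"})
)
```

Because gather only returns once every awaitable has finished, the "both must complete" rule falls out of the call itself rather than needing a separate barrier.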

Slice 2 runs PathAgent, PlanAgent, and HappinessAgent sequentially. PathAgent generates three realistic pivot paths ranked by feasibility. PlanAgent builds the 30-60-90 day action plan. HappinessAgent applies the reframe.
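Sequential execution here just means each agent awaits the previous agent's output. A rough sketch, with hypothetical agent functions and placeholder feasibility numbers that are not real output:

```python
import asyncio


async def path_agent(profile: dict) -> list[dict]:
    """Hypothetical: return three candidate paths, most feasible first."""
    candidates = [
        {"title": "Path A", "feasibility": 0.6},  # placeholder scores
        {"title": "Path B", "feasibility": 0.8},
        {"title": "Path C", "feasibility": 0.4},
    ]
    return sorted(candidates, key=lambda p: p["feasibility"], reverse=True)


async def plan_agent(path: dict) -> dict:
    """Hypothetical: build an empty 30-60-90 day plan for the chosen path."""
    return {"path": path["title"], "days": {30: [], 60: [], 90: []}}


async def happiness_agent(plan: dict) -> dict:
    """Hypothetical: attach the reframe to the finished plan."""
    return {**plan, "reframe": "placeholder text"}


async def run_slice_2(profile: dict, choice: int = 0) -> dict:
    # Each await finishes before the next agent starts: strictly sequential.
    paths = await path_agent(profile)
    plan = await plan_agent(paths[choice])  # the user's selection picks the path
    return await happiness_agent(plan)


result = asyncio.run(run_slice_2({"skills": ["qa", "python"]}))
```

The ordering matters because each step consumes the one before it: there is no plan without a chosen path, and no reframe without a plan.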

All inference goes through the Anthropic Claude API (claude-sonnet-4-20250514).

Quality Gate

Every slice boundary runs through awaf/gate.py, which checks five things: schema validity, a hallucination guard, tone compliance, a utility score, and coherence. An output that fails gets at most two retries. If it still fails, the event bus gets the awaf.failed signal and the pipeline handles the degradation. This is not cosmetic. The quality gate is doing real work on every agent output before anything moves downstream.
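The retry-then-signal behaviour can be sketched as a small wrapper. This is not the code in awaf/gate.py: `run_with_gate`, its parameters, and the toy checks are assumptions, and the sketch reads "max two retries" as one first attempt plus up to two more.

```python
from typing import Callable, Optional

MAX_RETRIES = 2  # assumption: two retries on top of the first attempt


def run_with_gate(
    agent: Callable[[], dict],
    checks: list[Callable[[dict], bool]],
    publish: Callable[[str, dict], None],
) -> Optional[dict]:
    """Run the agent, re-run on gate failure, publish awaf.failed if retries run out."""
    for _ in range(1 + MAX_RETRIES):
        output = agent()
        # The output only moves downstream if every check passes.
        if all(check(output) for check in checks):
            return output
    publish("awaf.failed", {"attempts": 1 + MAX_RETRIES})
    return None  # the caller decides how to degrade


events: list[tuple[str, dict]] = []
outputs = iter([{}, {"paths": [1, 2]}, {"paths": [1, 2, 3]}])
result = run_with_gate(
    agent=lambda: next(outputs),
    checks=[lambda o: "paths" in o, lambda o: len(o.get("paths", [])) == 3],
    publish=lambda name, payload: events.append((name, payload)),
)
```

In this run the first two outputs fail a check and the third passes, so nothing is published. Returning None instead of raising keeps the degradation decision with the pipeline, which matches the split of responsibilities described above.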

How Well: The AWAF Score

I ran the AWAF assessment against glitched.sh using the awaf-skill. The score came back at 52. Moderate risk.

The architecture earned its marks in the right places. The Foundation pillars score well. The quality gate at every slice boundary is a legitimate implementation of Reasoning Integrity. The event bus with named typed events is a clean Controllability signal. The Pydantic contracts at every boundary are doing exactly what Context Integrity requires at the schema layer.

The gaps are just as real. No defined SLOs. Observability is thin beyond the trace ID. The MarketAgent has no circuit breaker for when external salary data is unavailable. The retry logic does not distinguish transient failures from structural ones.
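To make the last gap concrete, here is one way retry logic could separate the two failure types. This is a sketch of a possible fix, not something glitched.sh does today. The exception classes and `call_with_retry` are hypothetical names.

```python
import time


class TransientError(Exception):
    """Hypothetical: timeouts or rate limits, where a retry may succeed."""


class StructuralError(Exception):
    """Hypothetical: bad schema or missing field, where a retry cannot help."""


def call_with_retry(fn, max_retries: int = 2, base_delay: float = 0.5):
    for attempt in range(1 + max_retries):
        try:
            return fn()
        except StructuralError:
            raise  # same input would fail the same way, so stop immediately
        except TransientError:
            if attempt == max_retries:
                raise  # out of retries, surface the error
            time.sleep(base_delay * 2**attempt)  # exponential backoff


calls = {"n": 0}


def flaky_salary_lookup() -> str:
    """Fails twice with a transient error, then succeeds."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("timeout")
    return "salary data"


value = call_with_retry(flaky_salary_lookup, base_delay=0.0)
```

The distinction saves both time and model spend: a structural failure costs one attempt instead of three.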

52 is honest. Architecturally intentional, operationally immature. That is exactly where this system is right now.

What's Next

The next run is through awaf-cli, the command-line implementation of AWAF as a model-agnostic tool. It supports Anthropic, OpenAI, Azure OpenAI, Google Gemini, and LiteLLM as a catch-all. Bring your own model and key. Ten concurrent pillar agents. No shared state between evaluations. I will publish the full pillar breakdown when that run is complete.

Beyond the assessment, the system itself is going to expand. The current version is a pipeline. The next version will feel like a conversation. An agent that can push back on assumptions in your intake, ask clarifying questions when paths are genuinely ambiguous, and surface market signals mid-session so you can react to them in real time. The interactivity layer is coming too: explore pivot paths before committing, adjust the action plan in conversation with the agent, see the reasoning behind the recommendations rather than just the output.

The AWAF score will track with that. As the system gets more capable, the assessment gets harder to pass. That is the point.

More agentic. More interactive. Higher score. That is the roadmap.

The spec is at github.com/YogirajA/AWAF. The CLI is at github.com/YogirajA/awaf-cli. Both are open.

glitched.sh is open source. If you are working on something in this space or want to run AWAF against your own agent system, reach out.
