A question that comes up constantly in Claude Code enablement conversations: why bother with context hygiene at all? Session getting long, model acting strange, output drifting? Just blow it up. Hit /clear, start fresh, move on.

It is a reasonable instinct. And it is usually the wrong move.

What Actually Happens When You Run Each Command

/clear wipes the conversation entirely. Zero context, zero history, zero memory of what you were building.

/compact does something more deliberate. It first clears the oldest tool outputs, then compresses what remains into a structured summary, keeping what matters: the files you touched, the decisions you made, the current state of the task, the errors you worked through. The token count drops dramatically. The useful information survives.

That difference matters more than most people account for.

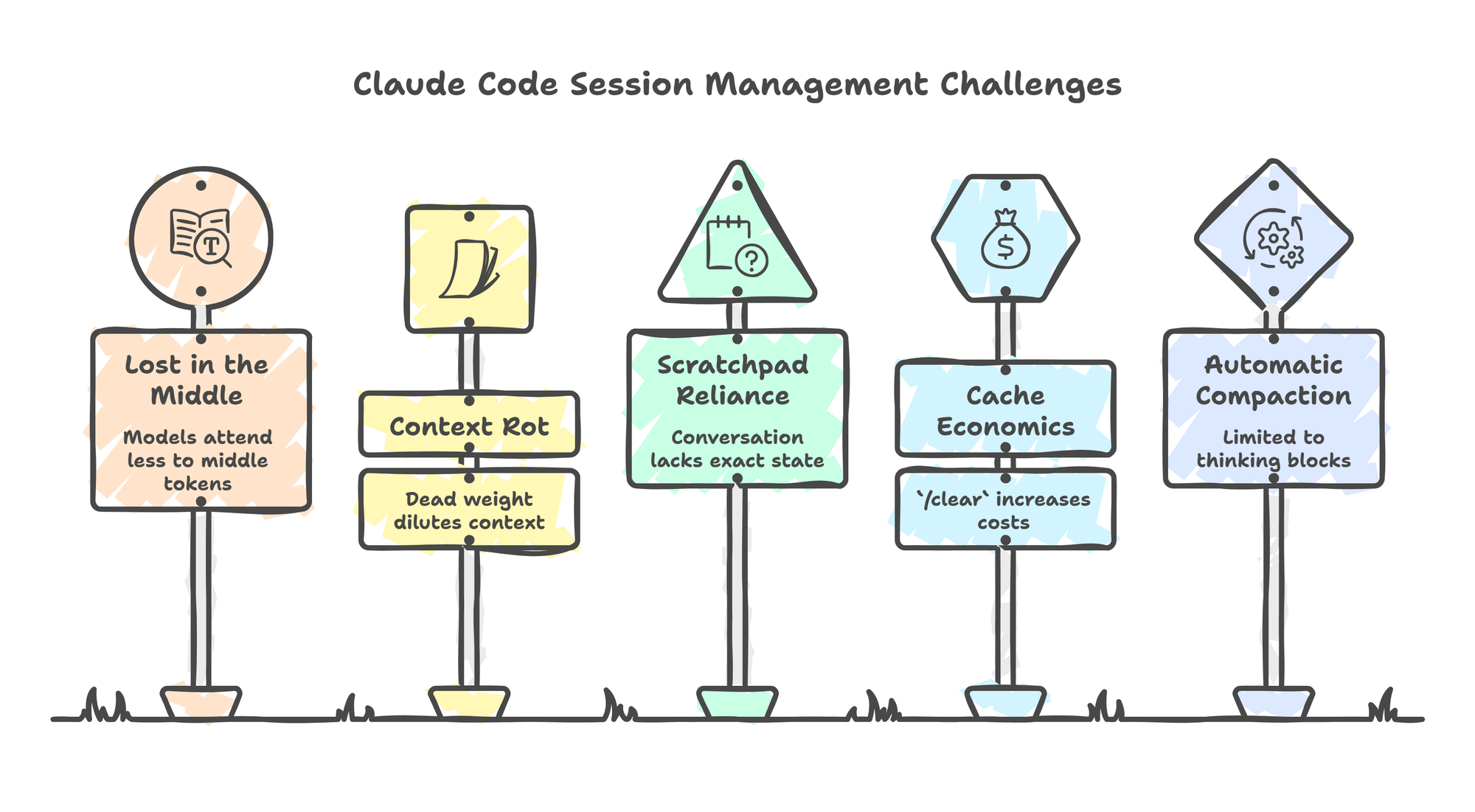

The Lost in the Middle Problem

Language models do not treat all tokens equally. Liu et al. (2024) found that models use information most reliably when it sits at the beginning or end of the context window, and less reliably when it sits in the middle. This is the "lost in the middle" effect, and it is a real limitation in production agentic workflows.

When a session grows long, your most important context ends up buried in the middle of a dense transcript. The architectural decisions from hour one. The constraints you established. The file you were editing before the last three tangents. Technically, the model still has access to all of it. In practice, its influence on generation weakens.

/compact collapses that sprawl into a tight summary at the top of a fresh context. The important things get front-loaded. The noise gets dropped.

/clear also solves the problem. It solves it by throwing out everything.

Context Rot Is Real, Even With 1M Tokens

Here is what surprises most people: a large context window does not protect you from degradation.

You can be at 40% utilization, hundreds of thousands of tokens remaining, well within technical limits, and still notice output quietly getting worse. Responses become slightly less precise. The model starts making assumptions it would not have made earlier. Subtle drift creeps in.

This is context rot. Anthropic's own docs now use this exact term: as token count grows, accuracy and recall degrade. It is not about running out of space. It is about what happens to the signal-to-noise ratio over time.

A long session accumulates dead weight: abandoned approaches, superseded file versions, exploratory tangents, intermediate error messages that were useful in the moment and meaningless now. None of that gets garbage-collected. It just sits there, diluting the context the model is reasoning over.

In practice you can start seeing this after roughly 30 minutes of sustained work, even with plenty of context room left. The issue is not capacity. It is staleness.

Think of it like a cup of ice cream. You would not enjoy a scoop that has been sitting out for 30 minutes, no matter how good it was when it was fresh. But you are also not trying to shove a frozen solid block down all at once and give yourself brain freeze. You want it at the right temperature, at a manageable pace. Too stale and quality degrades. Too much at once and the model is overwhelmed. /compact is the equivalent of refreshing your cup while keeping the flavor.

It produces a summary that reflects the current state of things. Not the archaeological record of how you got there.

Scratchpads for Exact State

If precision matters, do not rely on the conversation to hold exact state over time.

Write it to a file explicitly. Have Claude maintain a SCRATCH.md or task-specific file as the source of truth. When you /compact, the summary will reference the file rather than reconstruct its contents from conversational memory.

This matters most for exact syntax: YAML, JSON, environment configs, CLAUDE.md files themselves. A compacted summary captures intent and structure. It may soften the edges on exact values. The file does not have that problem.

The conversation is the reasoning layer. The file is the memory layer. Treat them accordingly.
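As a minimal sketch of what "the file is the memory layer" can look like, the snippet below renders exact values into a scratch file and reads them back unchanged. Everything here is an assumption made for illustration: the helper names `render_scratch` and `parse_scratch`, the single fenced JSON block layout, and the sample values. In a real session Claude would maintain the file itself; the point is only that values stored this way survive byte for byte, where a summary might paraphrase them.

```python
import json
from pathlib import Path

# Built programmatically so this example nests cleanly inside markdown.
FENCE = "`" * 3


def render_scratch(task: str, exact_state: dict) -> str:
    """Render a hypothetical SCRATCH.md: a title plus one fenced JSON block."""
    body = json.dumps(exact_state, indent=2, sort_keys=True)
    return f"# Scratch: {task}\n\n{FENCE}json\n{body}\n{FENCE}\n"


def parse_scratch(text: str) -> dict:
    """Recover the exact state from text produced by render_scratch."""
    opener = FENCE + "json\n"
    start = text.index(opener) + len(opener)
    end = text.index("\n" + FENCE, start)
    return json.loads(text[start:end])


def write_scratch(path: Path, task: str, exact_state: dict) -> None:
    """Persist the scratch file so it outlives any conversation summary."""
    path.write_text(render_scratch(task, exact_state), encoding="utf-8")


# Illustrative values only; substitute whatever exact state your task needs.
state = {"image": "api:1.4.2", "replicas": 3, "env": {"LOG_LEVEL": "debug"}}
roundtrip = parse_scratch(render_scratch("deploy config", state))
```

After a /compact, pointing Claude back at the file restores the exact values, so the summary only has to carry the intent.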

The Cache Economics Angle

This one matters more at scale than most practitioners account for.

Anthropic's API uses prompt caching to reduce cost on repeated prefixes. Cache hits are billed at a fraction of the standard input rate. The real cost driver on any turn is how many input tokens are NOT cached.

When you run /clear, the next turn shares none of the conversation prefix with the prior session. Cache hits on that history drop to zero. Anything you re-explain or re-read is billed as fresh input until a new cache builds up under the fresh prefix.

With /compact, the summarized context becomes the new cached prefix. Subsequent turns benefit from cache reads on that summary. You are paying for the delta on each turn, not the full load every time.

For individual use this is a minor consideration. For teams running Claude Code at volume, the difference compounds. The full math on this (write multipliers, TTL tradeoffs, when caching actually wins) deserves its own post on the tokenomics of long-running agents. The directional point is enough for now: /compact keeps you on the cached path. /clear does not.
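To make the directional point concrete, here is a rough sketch of per-turn input cost. The numbers are assumptions, not quoted prices: `base_input` is a hypothetical dollar rate per million tokens, and the read and write multipliers are illustrative defaults that should be checked against the current pricing page. The function name `turn_input_cost` is likewise made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    base_input: float = 3.00        # hypothetical USD per million input tokens
    cache_read_mult: float = 0.10   # assumed multiplier for cache reads
    cache_write_mult: float = 1.25  # assumed multiplier for cache writes


def turn_input_cost(cached: int, written: int, uncached: int,
                    rates: Rates = Rates()) -> float:
    """Input cost in USD for one turn, split by how each token is billed."""
    per_token = rates.base_input / 1_000_000
    weighted_tokens = (
        cached * rates.cache_read_mult      # prefix tokens read from cache
        + written * rates.cache_write_mult  # tokens written into the cache
        + uncached                          # tokens billed at the plain rate
    )
    return per_token * weighted_tokens


# The same 50,000-token turn, billed three ways.
no_cache = turn_input_cost(cached=0, written=0, uncached=50_000)
first_write = turn_input_cost(cached=0, written=48_000, uncached=2_000)
warm = turn_input_cost(cached=48_000, written=0, uncached=2_000)
```

Under these assumed rates the turn costs about $0.15 with no caching, about $0.19 on the turn that first writes the prefix, and about $0.02 once the prefix is warm. The write turn is the most expensive of the three, which is why the number of turns that follow decides whether caching wins.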

A Related Mechanism: How Thinking Blocks Stay Cheap

There is one more piece worth understanding.

When Claude generates thinking blocks, those blocks are billed as output tokens once, at generation. On subsequent turns they are excluded from the input context calculation:

context_window = (input_tokens - previous_thinking_tokens) + current_turn_tokens

You pay for thinking once. Then it drops out. Turn 5 does not carry the reasoning Claude did on turn 1.

This is automatic compaction for one specific category of tokens.
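The formula above can be worked through with a small sketch. The function name and the token counts are hypothetical, chosen only to show the arithmetic; real counts come from the API's usage reporting.

```python
def effective_context_tokens(input_tokens: int,
                             previous_thinking_tokens: int,
                             current_turn_tokens: int) -> int:
    """Apply the formula above: earlier thinking is dropped, the rest carries over."""
    if previous_thinking_tokens > input_tokens:
        raise ValueError("previous thinking cannot exceed total input tokens")
    return (input_tokens - previous_thinking_tokens) + current_turn_tokens


# Illustrative numbers: 60,000 tokens of history, 12,000 of which were
# thinking from earlier turns, plus 5,000 tokens for the current turn.
effective = effective_context_tokens(60_000, 12_000, 5_000)  # 53,000
```

The 12,000 thinking tokens were paid for once as output and do not count against the window again. The other 48,000 tokens of history still do.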

But the thinking-block mechanism is automatic and narrowly scoped. Tool calls, tool results, file reads, user messages, and assistant responses still accumulate until Claude Code's auto-compact kicks in at the limit. /compact lets you take that step on your own terms, before the limit forces it.

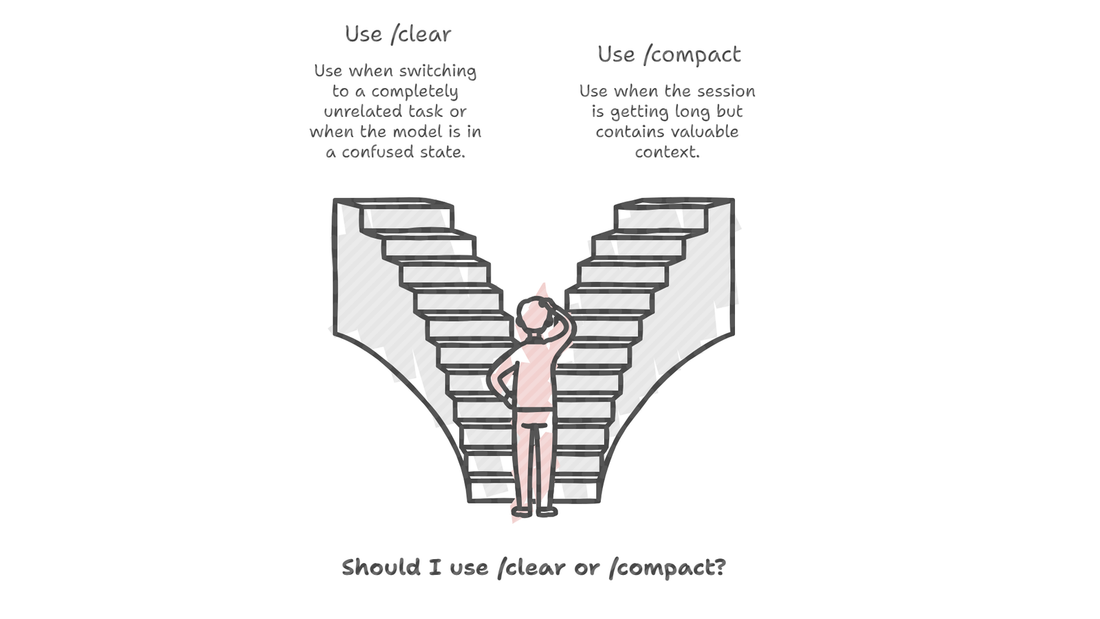

When /clear Actually Makes Sense

/clear is not useless. There are legitimate situations for it.

Use it when switching to a completely unrelated task. Use it when the model has entered a confused or looping state that compaction is unlikely to fix. Use it when you want zero carryover into a new workstream.

But "the session is getting long" is not a good enough reason. That is exactly the situation /compact was built for.

One Question Before You Clear

Before you hit /clear: is there anything in this session you would have to re-explain if you started fresh?

If yes, run /compact first. If the compacted session still feels unwieldy or the model is still behaving strangely, then clear it.

Most of the time, compact is enough. And most of the time, the context you thought was slowing you down was actually the context you needed.

References

- Context windows (Anthropic docs). Source of the thinking blocks formula.

- Extended thinking (Anthropic docs). How thinking blocks are billed and stripped between turns.

- Pricing and prompt caching (Anthropic docs). Cache read and write rates.

- How Claude Code works (Anthropic docs). Auto-compact and context management behavior.

- Slash commands in the SDK (Anthropic docs). /compact and /clear reference.

- Lost in the Middle: How Language Models Use Long Contexts (Liu et al., TACL 2024). The original research on positional attention degradation.
